The role, benefits, and challenges of AI chatbots in the medical field.

The seemingly irresistible march of Artificial Intelligence is making increasingly dramatic inroads into the realm of medicine. In this article, we explore the role, benefits, and challenges of AI chatbots in the medical field.

Whilst advocates of AI chatbots cite the advantages of enhancing accessibility, streamlining operations, and supporting both patients and providers through intelligent automation, critics say that there are increasing risks to patient safety through inaccurate medical advice, potential misdiagnosis, data privacy breaches, and high-stakes algorithmic biases. They also argue that chatbots lack clinical empathy and cannot interpret complex patient histories, which may lead to dangerous over-reliance or harmful, “hallucinated”* treatment recommendations.

* Hallucinated medical treatments in this context refer to inaccuracies, invented data, or errors generated by Artificial Intelligence (AI) tools — such as transcription tools or large language models (LLMs) — when processing patient data, clinical notes, or medical research. These "hallucinations" create plausible but false, unverified, or entirely invented medical diagnoses or treatment plans, posing significant safety risks in healthcare settings.

The role of AI in Healthcare

Artificial intelligence in healthcare improves diagnostic accuracy, personalizes patient treatment, and automates administrative tasks. Key roles include analyzing medical imaging (e.g., detecting cancers or fractures), powering drug discovery, optimizing operational efficiency through predictive analytics, and enabling virtual health assistants to enhance patient care.

Benefits of AI chatbots in medicine

AI chatbots are rapidly transforming the healthcare landscape by enhancing accessibility, streamlining operations, and supporting both patients and providers through intelligent automation.

As their capabilities grow, thoughtful AI chatbot development is proving essential for managing routine tasks, delivering accurate health information, and improving patient engagement, all while maintaining data privacy and regulatory compliance.

The top chatbots in 2026 showcase just how far this technology has come, offering scalable, cost-effective, and personalized healthcare support.

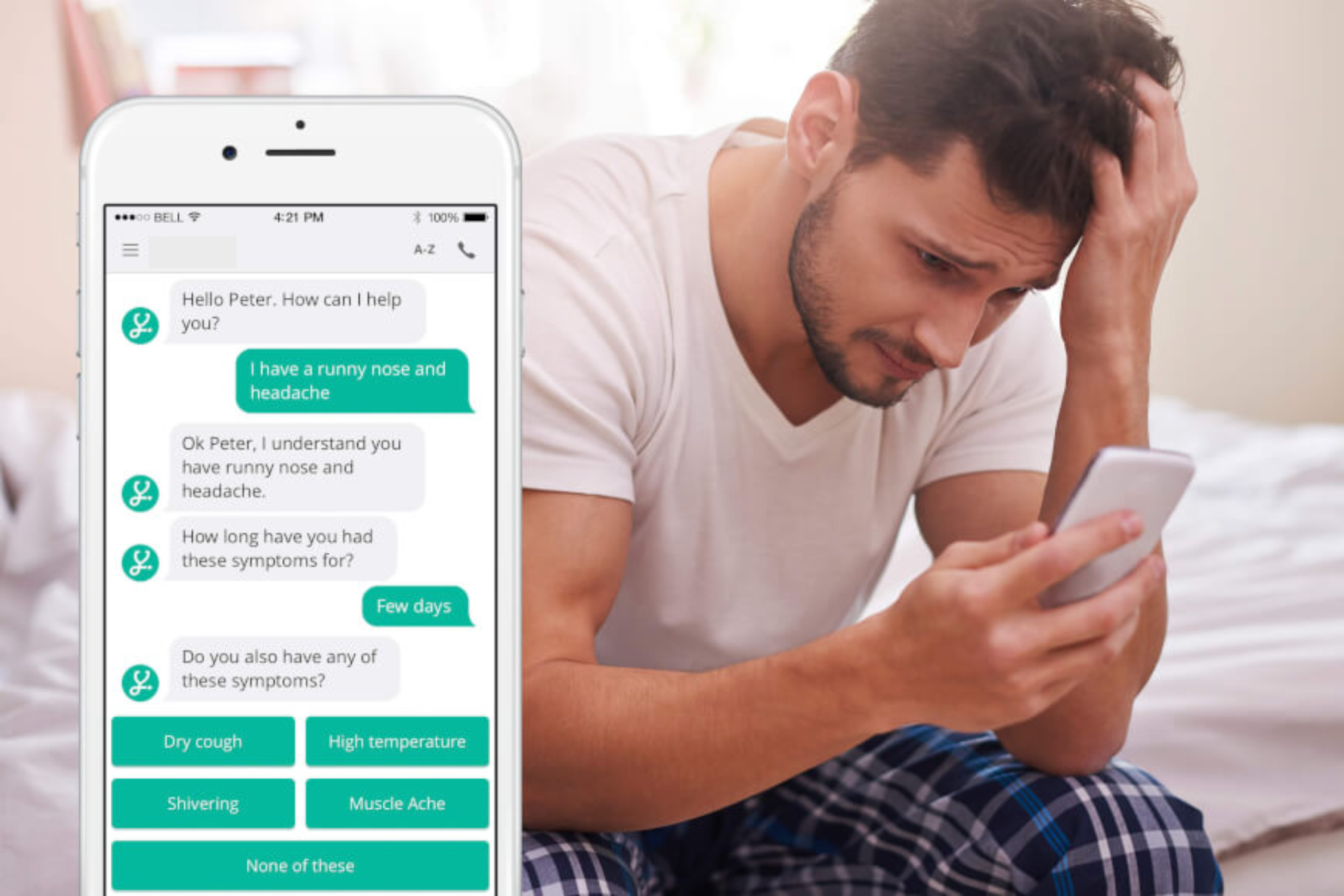

As the industry continues to evolve, AI chatbots will play a pivotal role in creating a more efficient, responsive, and patient-centric healthcare system. They can improve healthcare efficiency and patient outcomes by offering 24/7 access to information, automating administrative tasks like appointment scheduling, and providing preliminary symptom triage. They also enhance patient engagement, support mental health monitoring, and assist clinicians with clinical decision-making. These AI tools also analyze patient data to provide personalized care, such as medication reminders and follow-up care instructions.

Key Benefits of AI Chatbots in Medicine:

- Improved Accessibility and Triage:

Chatbots provide 24/7 access to information, allowing for instant symptom checking and triage, which helps patients determine if they need urgent care and streamlines the booking of appointments.

- Operational Efficiency:

By handling routine tasks such as scheduling, billing inquiries, and insurance inquiries, AI chatbots lower costs and reduce the administrative burden on healthcare staff.

- Enhanced Patient Care and Engagement:

They provide personalized health guidance and medication reminders, and monitor patients through wearable data analysis, leading to better compliance with treatment plans.

- Mental Health Support:

Specialized AI chatbots offer consistent emotional support, mood tracking, and coping exercises, providing a safe, accessible first step towards therapy.

- Clinical Decision Support:

AI tools can assist clinicians by analyzing clinical notes and data, identifying potential diagnoses, and helping with clinical decision-making, such as managing medication dosage.

- Improved Patient Experience:

By reducing wait times and providing instantaneous responses, chatbots increase patient satisfaction, say technology providers such as Coherent Solutions and John Snow Labs.

AI tools are meant to "support, not replace, medical care".

Challenges of AI in the medical field

In an article on the WEF website, the author, Gabriel Onuh, wrote:

“Artificial intelligence (AI) is rapidly transforming healthcare. From early cancer detection to personalized treatment recommendations, AI systems seek to make medicine faster, more precise and more affordable.

In high-income countries, these tools are already being tested in hospitals, research centres and clinics, and the results are impressive. Yet, for most of the world’s population, nearly 5 billion people living in low and middle-income countries, the benefits of medical AI remain out of reach.

Instead of narrowing health inequality, current approaches to AI development risk widening it. Most AI health systems are built on data sourced from a narrow set of populations, which means billions of people, mainly from the Global South remain largely invisible in diagnostic models, risk assessments and treatment algorithms.”

An article published on PubMed Central in January 2025 asserts that healthcare may be transformed by AI, but that its success requires ethical and responsible use. To meet the changing demands of the healthcare sector and guarantee the responsible application of AI technologies, the authors highlight the necessity of ongoing research, education, and multi-disciplinary cooperation. They go on to say that responsible integration of AI will be essential to optimizing its advantages and reducing the related dangers.

What are the challenges of employing AI chatbots in Healthcare?

Artificial Intelligence (AI) may be highly advanced and tireless, but health chatbots generally lack ‘the human touch’, defined as genuine empathy, emotional insight, and the ability to understand non-verbal cues. While they can simulate empathetic language and offer 24/7 support, they operate based on algorithms and training data rather than true human understanding or compassion. AI may therefore never fully replace certain jobs that require qualities beyond software capabilities, such as empathy or the ability to make complex real-world decisions.

Here are some reasons given for the limitations, and capabilities, of health chatbots regarding the “human touch”:

Why Chatbots Lack the Human Touch

- Absence of Genuine Empathy:

AI cannot feel emotions, so its “empathy” is simulated, which can sometimes feel inauthentic or “fake” to users.

- Inability to Detect Nuance:

Chatbots often miss non-verbal cues, tone, and body language, which are essential for identifying a patient's true emotional state.

- Lack of Contextual Understanding:

They struggle with complex, nuanced, and high-acuity situations where a human clinician or therapist would know when to push or when to “hold space”.

- “Computerised Mirror” Effect:

Chatbots often just reflect back user thoughts, whereas a human clinician or therapist reinterprets and helps a patient navigate their emotions.

- Safety Risks:

Chatbots may fail to recognize suicidal intent, or leading questions and phrases, and in some cases this results in harmful or inappropriate advice.

Where Chatbots can Offer Value

- Accessibility and Convenience:

They are available 24/7, providing immediate support, which is useful for routine queries or basic health monitoring.

- Non-Judgmental Space:

Many users find it easier to discuss sensitive topics with an AI that doesn't judge, reducing the stigma associated with seeking help.

- Consistency:

AI provides consistent, standardized information, which can be helpful for tracking health behavior goals.

The Verdict on “Human Touch”

While some studies suggest users find AI responses to be more empathetic in text-only scenarios, that empathy is often perceived as less “authentic” than human care. The consensus is that AI is best suited as a tool to support, not replace, human healthcare professionals, particularly in fields like mental health where human connection is paramount.

What is ‘Correct’ Information?

When it comes to health, it is becoming more and more challenging for an ordinary person to decide what to believe and what not to believe.

Using AI medical chatbots can be a helpful way to understand medical terminology or prepare for appointments, but experts warn that relying on them for diagnosis or treatment is dangerous. These tools can provide inaccurate, inconsistent, or outdated information that may lead to harmful delays in seeking professional care.

In a recent article published on PubMed Central, the authors stated:

“The risk of misinformation and errors is a significant concern, particularly in health care where accuracy is critical. The one-size-fits-all approach of LLMs [Large Language Models] may not align well with the nuanced needs of patient-centered care in the health sector.”

Here are the key things to be wary of when using AI medical chatbots:

- Inaccuracy and “Hallucinations” (Fabricated Information)

- Confident Misinformation:

AI chatbots often present incorrect information with high confidence.

- Hallucinations:

Chatbots can invent medical studies, citations, or URLs that do not exist, or make up entirely false facts.

- Vulnerability to False Inputs:

If a user includes incorrect information in their query, the chatbot may accept it as fact and build upon it, providing an incorrect diagnosis.

- Lack of Clinical Context and Physical Exams

- No Physical Examination:

Chatbots cannot perform physical exams, check blood pressure, or feel symptoms, which are crucial for accurate diagnoses.

- Missing Medical History:

A chatbot does not know your full medical history, allergies, or genetic factors unless you provide them, and even then, it may not understand the full picture.

- Incorrect Context:

A chatbot might provide a technically correct answer that is medically inappropriate because it lacks the context of your specific situation.

- Inconsistent Advice:

The same query asked in slightly different ways can produce completely different advice, leading to inconsistency in potential treatment decisions.

- No Replacement for Human Expertise:

Experts warn that AI is not equipped to replace the nuanced understanding of a human doctor, who can recognize critical details that a chatbot might overlook.

- Serious Safety Risks

- Dangerous Delays in Care:

A 2024 study reported a case of a patient suffering a life-threatening delay in treating a “mini-stroke” (transient ischemic attack) after relying on an incorrect chatbot diagnosis.

- Inappropriate Advice:

AI tools have been known to suggest dangerous treatments or fail to identify when symptoms are urgent.

- Drug Interaction Mistakes:

Chatbots may incorrectly identify drug interactions, such as wrongly claiming it is safe to combine medications that could cause a dangerous drop in blood pressure.

- Privacy and Data Security Issues

Conversations with general AI chatbots, such as ChatGPT and Gemini, are often not protected by laws such as HIPAA [Health Insurance Portability and Accountability Act], which protects patient privacy in the US. ChatGPT (OpenAI) and Gemini (Google) are increasingly subject to regulation, but the regulatory landscape is evolving, fragmented, and varies by region. These tools are not yet regulated by a single, global AI law. Instead, they are governed by existing data protection, consumer privacy, and emerging AI-specific regulations.

Many AI companies use user chat data to train their models. This means sensitive health information could be stored or used by the company. Entering personal health information into chatbots increases the risk of data being exposed or hacked. The European Union is implementing the AI Act. This act classifies tools like ChatGPT and Gemini as “general-purpose AI models”, requiring them to comply with strict transparency rules and risk assessments.

As of late 2025/early 2026, there is no comprehensive federal AI law in the U.S., but individual states are acting. For example, Colorado has passed legislation setting requirements for high-risk AI systems to avoid discrimination.

- Inherent Biases

AI models can reflect biases in their training data. This can lead to different recommendations based on race, gender, or socioeconomic status. Some models express stigma toward people with mental health conditions. This may lead to inappropriate advice that could harm users.

Tips for Safe Use of AI chatbots

Always check medical claims with a licensed healthcare professional or reputable source, such as the CDC or NIH.

Do not use AI for emergencies. Seek immediate medical attention if you have severe symptoms.

Do not share personal information, such as name, address, Social Security number, or detailed medical records, with a chatbot.

Use AI as a tool for basic understanding rather than a primary source of advice.

Comment:

The development of Artificial Intelligence over the last decade has been nothing short of meteoric. Over the last 20 years (2006–2026), AI has transitioned from narrow, rule-based systems to transformative generative AI.

Key milestones include the rise of Machine Learning (ML) and deep learning (2010s), the 2012 image recognition breakthrough, the 2017 Transformer architecture, and the 2022 explosion of Large Language Models (LLMs) like Google's Gemini and OpenAI's ChatGPT, driven by massive datasets and computing power. The exponential growth in the collection of data, through the use of mobile phones, has given developers access to phenomenal amounts of data; it is estimated that in 2020 data generation/replication exceeded 64 zettabytes (a zettabyte is 1 trillion gigabytes). A single zettabyte could store roughly 250 billion DVDs or 36 billion hours of HD video.
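For readers who want to check the storage figures, the unit arithmetic can be sketched in a few lines of Python. This is only an illustration: it assumes decimal (SI) units, in which one zettabyte is 10^21 bytes, and a round 4 GB of usable space per DVD. The 4 GB figure is an assumption chosen here because it reproduces the 250 billion estimate; a single-layer DVD is nominally 4.7 GB.

```python
# Decimal (SI) storage units, expressed in bytes.
GIGABYTE_BYTES = 10**9
ZETTABYTE_BYTES = 10**21

# Assumption for illustration: about 4 GB of usable space per DVD.
DVD_BYTES = 4 * GIGABYTE_BYTES

# How many gigabytes and how many DVDs fit in one zettabyte.
gigabytes_per_zettabyte = ZETTABYTE_BYTES // GIGABYTE_BYTES
dvds_per_zettabyte = ZETTABYTE_BYTES // DVD_BYTES

print(f"{gigabytes_per_zettabyte:,} gigabytes per zettabyte")
print(f"{dvds_per_zettabyte:,} DVDs per zettabyte")
```

Under these assumptions, one zettabyte works out to one trillion gigabytes, or about 250 billion DVDs.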

IBM What is a transformer model?

The challenge for users of health chatbots is to determine whether the information we are receiving is 'correct' or not. How do we do this? The answer is to use our understanding of Buddhi.

Read our article on Buddhi here: Buddhi, Free Your Mind

Use a 3-step approach:

- Analyze the Source:

Is it motivated by true wisdom? Ask the question(s) you have in different ways to determine what information or advice is returned each time. Use different chatbots and ask the same questions. If the answers or advice are substantially the same then plan your actions accordingly.

- Cross-Reference with Experience:

Does the information returned align with your lived experience? Examine the information with mindful observation.

- Evaluate Consequences:

Is the information or advice helpful or harmful in the long term? What could happen if I follow the advice without question?
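For technically minded readers, the first step (asking the same question in several ways, or of several chatbots, and comparing the answers) could be roughly sketched in Python as below. This is a minimal illustration, not a validated method: the function name `answers_agree` and the 0.6 threshold are hypothetical choices made for this example, and the comparison uses simple text similarity from Python's standard `difflib` module, which measures wording rather than medical meaning. Agreement between chatbots does not prove that the advice is correct.

```python
from difflib import SequenceMatcher
from itertools import combinations


def answers_agree(answers, threshold=0.6):
    """Return True if every pair of answers is at least `threshold` similar.

    `threshold` is an arbitrary illustrative cut-off, not a clinical standard.
    """
    # Lower-case and collapse whitespace so trivial differences are ignored.
    normalized = [" ".join(answer.lower().split()) for answer in answers]
    # Compare every pair of answers with a 0.0 to 1.0 similarity ratio.
    return all(
        SequenceMatcher(None, first, second).ratio() >= threshold
        for first, second in combinations(normalized, 2)
    )


# Hypothetical answers collected by hand from two different chatbots.
collected = [
    "Rest and drink plenty of fluids.",
    "rest and  drink plenty of fluids.",
]
print(answers_agree(collected))
```

If the answers disagree, that is a signal to be especially cautious; if they agree, the remaining two steps and a check with a healthcare professional still apply.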

Natural Health Evidence Based recommends using AI health chatbots only as a tool to assist you in your quest for information. DO NOT rely on AI to provide you with a diagnosis or cure.

Seek the immediate assistance of a registered doctor, or healthcare professional, for medical advice and treatment in all cases of illness or injury.

Further reading

PubMedCentral Roles, Users, Benefits, and Limitations of Chatbots in Health Care: Rapid Review

John Snow Labs Why Medical Chatbots Are Essential in Modern Healthcare. Haziqa Sajid, Data scientist and technical writer, John Snow Labs.

PubMedCentral Artificial Intelligence (AI) Chatbots in Medicine: A Supplement, Not a Substitute. Editorial Cureus. 2023 Jun 25;15(6):e40922. doi: 10.7759/cureus.40922

Stanford University Study suggests physicians make better decisions with help of AI chatbots. Stanford University, February 7th, 2025.

Synapxe 5 Examples of AI in Healthcare, its Challenges and Future Trends. 13 Nov 2025

LiveChatAI How to Use AI Chatbots for Healthcare - 17 Best Practices

PubMedCentral The Impact of Artificial Intelligence on Healthcare: A Comprehensive Review of Advancements in Diagnostics, Treatment, and Operational Efficiency. PubMed Central, 2025

Oxford University New study warns of risks in AI chatbots giving medical advice

Nature Medicine Reliability of LLMs as medical assistants for the general public: a randomized preregistered study 09 February 2026.

Duke University School of Medicine The hidden risks of asking AI for health advice

Canadian Medical Association Can you trust AI for health advice?

BBC News Glue pizza and eat rocks: Google AI search errors go viral

PBS 5 things you should consider before asking an AI chatbot for health advice

PBS Analysis: AI in health care could save lives and money - but not yet

WEF AI in healthcare risks could exclude 5 billion people; here’s what we can do about it. Oct 1, 2025